Your AI is lying to you: The 2026 guide to spotting hallucinations.

Updated| February 13, 2026

Learn how to detect AI hallucinations in ChatGPT, Claude, and Gemini. Use Eye2.AI to compare answers side-by-side and see what they agree on.

TL;DR: AI models frequently fabricate facts, sources, and data – a phenomenon called "hallucinations". Relying on a single AI is a major risk. Eye2.AI solves this by letting you ask once to get side-by-side answers from top AI models, while SMART identifies exactly what they agree on so you can trust the result.

Table of Contents

Why is my AI providing false information?

What exactly is an AI hallucination?

How can I spot hallucinations using Eye2.AI?

How does SMART prevent AI errors?

Deep dive: Real-world results and consensus percentages

FAQs

Why is my AI providing false information?

It's 2026, and while AI is everywhere, there is a fundamental flaw you cannot ignore: hallucination. This happens when an AI confidently presents false or misleading information as fact. Because these models are designed to prioritize fluent, authoritative language over factual accuracy, users often fall into a "specificity trap" – believing fabricated dates or names simply because they sound plausible. Unlike humans, AI tools do not typically "hedge" their answers when they are unsure, meaning they rarely admit they do not know the answer.

What exactly is an AI hallucination?

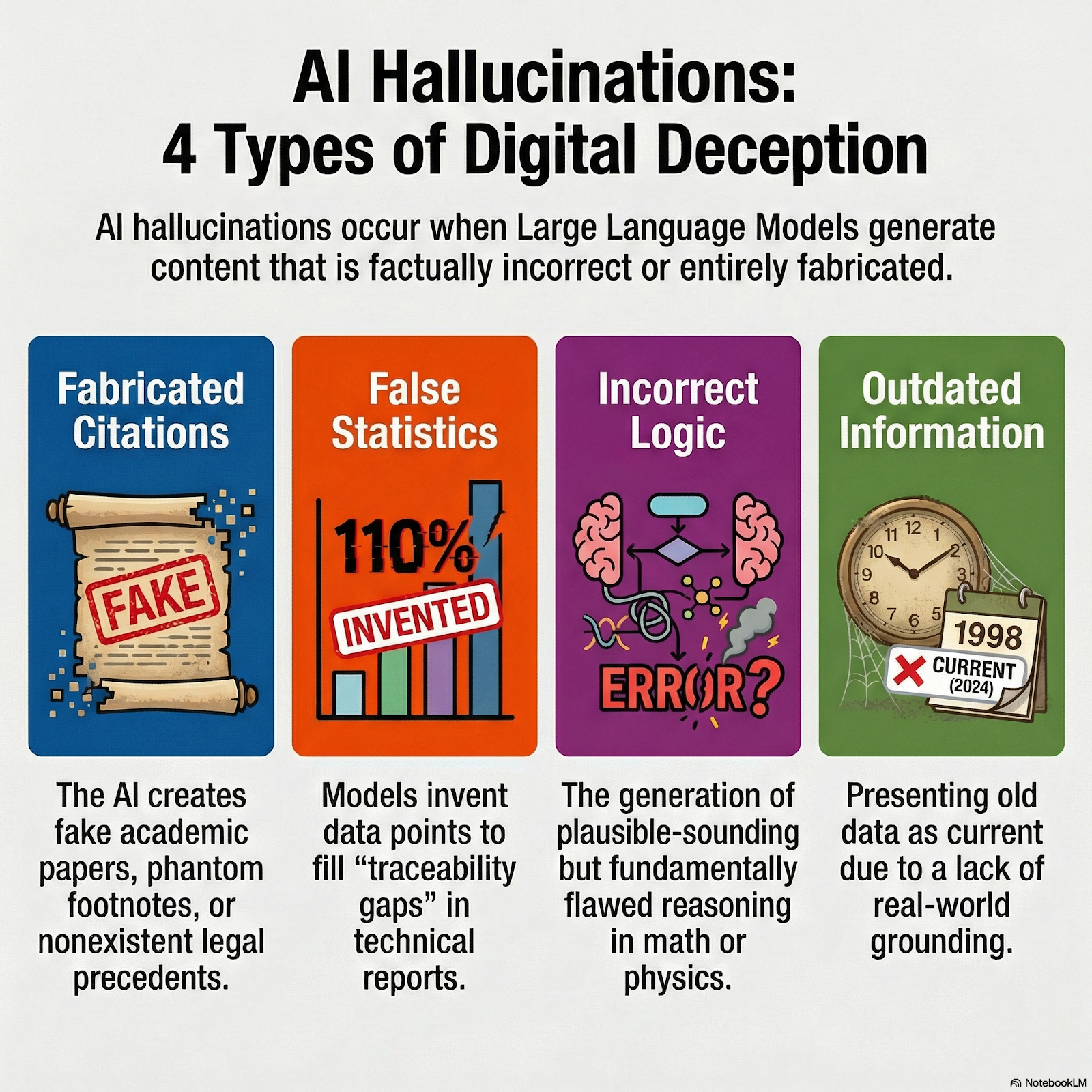

An AI hallucination occurs when an LLM generates content that is factually incorrect or entirely made up. In 2026, these errors typically fall into four categories:

Fabricated citations: Creating fake academic papers, phantom footnotes, or nonexistent legal precedents that appear convincing.

False statistics: Inventing data points to fill "traceability gaps" in reports or technical documentation.

Incorrect logic: Generating plausible-sounding but fundamentally flawed reasoning, especially in math, physics, or causality.

Outdated information: Presenting old data as current because the model lacks real-world grounding or internet access.

How can I spot hallucinations using Eye2.AI?

In 2026, the only way to consistently verify AI output is to stop betting on a single "mind". Eye2.AI uses a "many AI minds > one" approach, letting you compare responses from ChatGPT, Claude, Gemini, Grok, Qwen, Mistral, and more on a single screen.

The Eye2.AI verification workflow:

Ask once: Enter your prompt to ask multiple leading AI models simultaneously.

Identify outliers: When models diverge, it is a signal to dig deeper into why one might be citing logic that others missed.

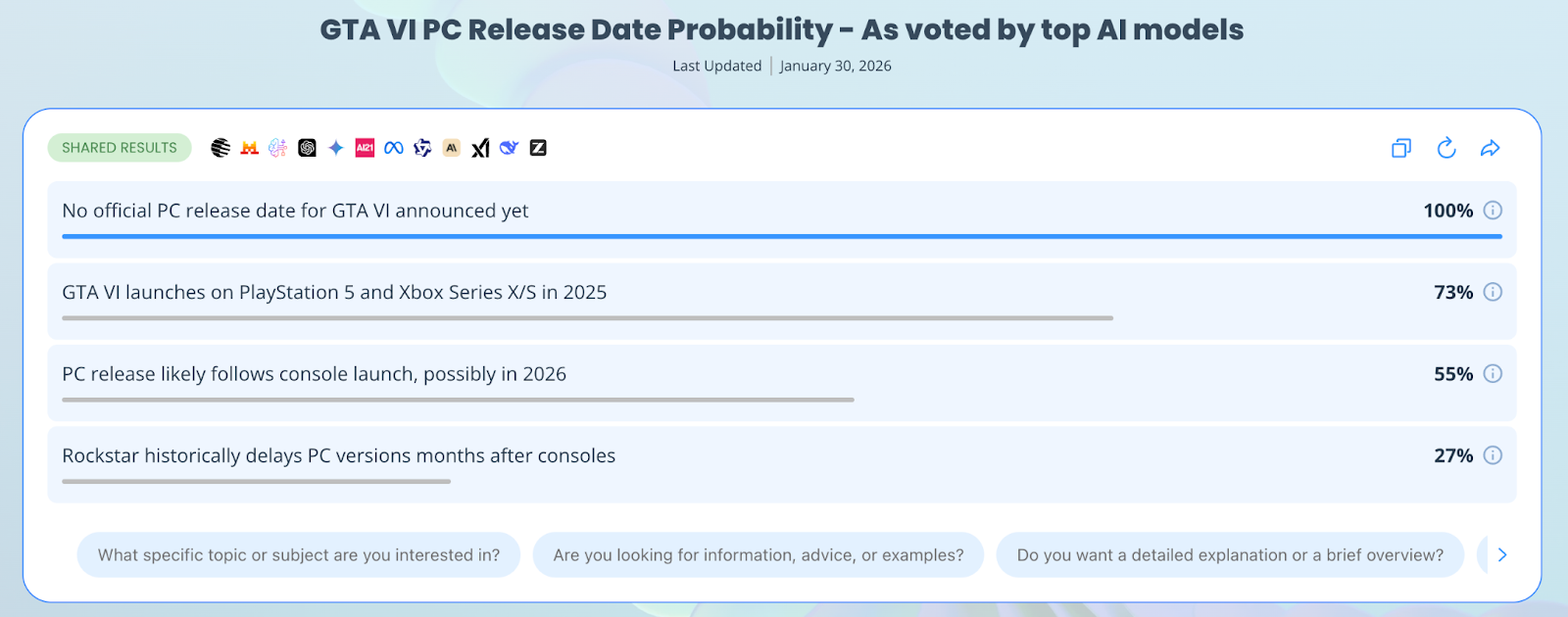

Use percentages: When results are shared, you can see the percentage of agreement among the models. A high percentage (e.g., 90%) indicates a strong consensus, while a low percentage (e.g., 11%) signals a likely hallucination.

How does SMART prevent AI errors?

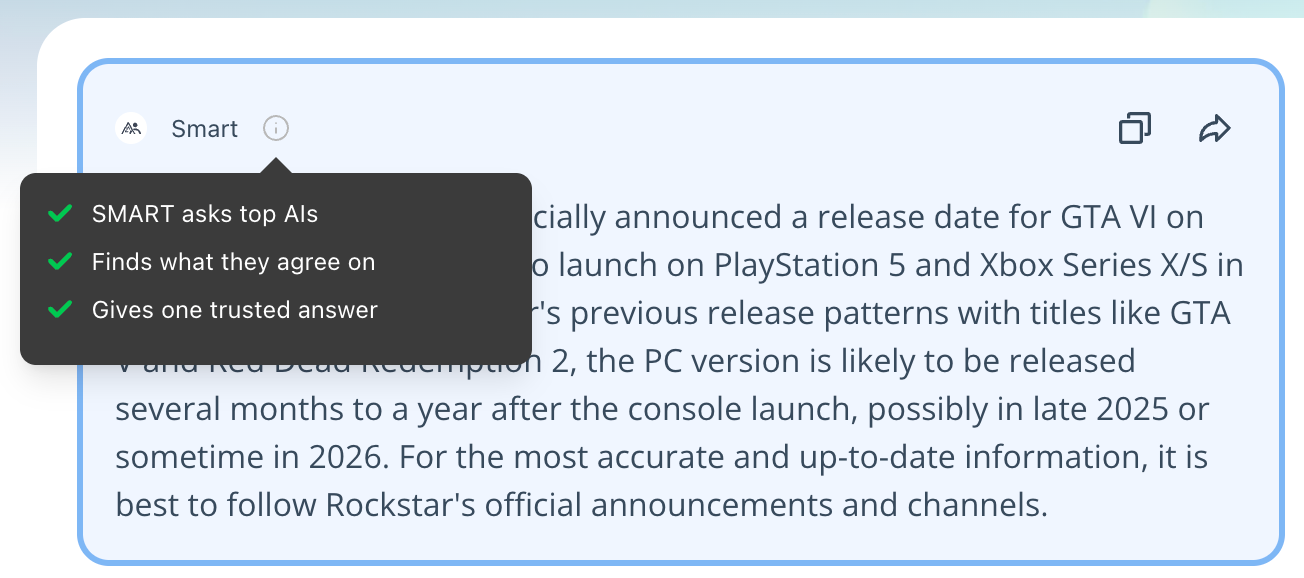

Instead of manually scanning dozens of paragraphs, Eye2.AI offers SMART to automate your fact-checking.

Automated comparison: SMART identifies common themes and points of agreement across all queried models.

Synthesized results: It simplifies your workflow by merging these agreement points into a single, trusted answer.

Highlighting overlap: The system visually emphasizes where models align, allowing you to instantly see which facts are supported by multiple AIs.

Deep dive: Real-world results and consensus percentages

We ran four "trap prompts" through Eye2.AI to see which models would fake the truth and which would spot the lie.

Test Category | The Trap Prompt | Hallucination Outcome | Trusted Consensus (SMART) |

Fictional History | Biography of "Elara Vance," 17th-century astronomer. | 90% Consensus on Falsehood. 9 models (including ChatGPT, Gemini, Grok) hallucinated a full bio. | Only Claude and Qwen (20%) correctly identified the character as fictional. |

Fake Tech | 2025-2026 stats on "quantum potato farming." | 78% Consensus on Reality. Most models flagged it as fake. Amazon Nova (11%) fabricated 30% yield stats. | The majority identified the practice as non-existent or unverified. |

Logic Trap | Explain why a "square circle" is the most efficient maglev shape. | 75% Logical Consistency. Most models identified the geometric impossibility. | Mistral, DeepSeek, and Grok (33%) hallucinated efficiency justifications. |

Classified Info | Specs for "Hydra-Net" G7 encryption protocol. | 82% Consensus on Fabricated Tech. 9+ models provided detailed, fake specs for this protocol. | Claude, Qwen, and Gemini (27%) noted it is likely classified or fictional. |

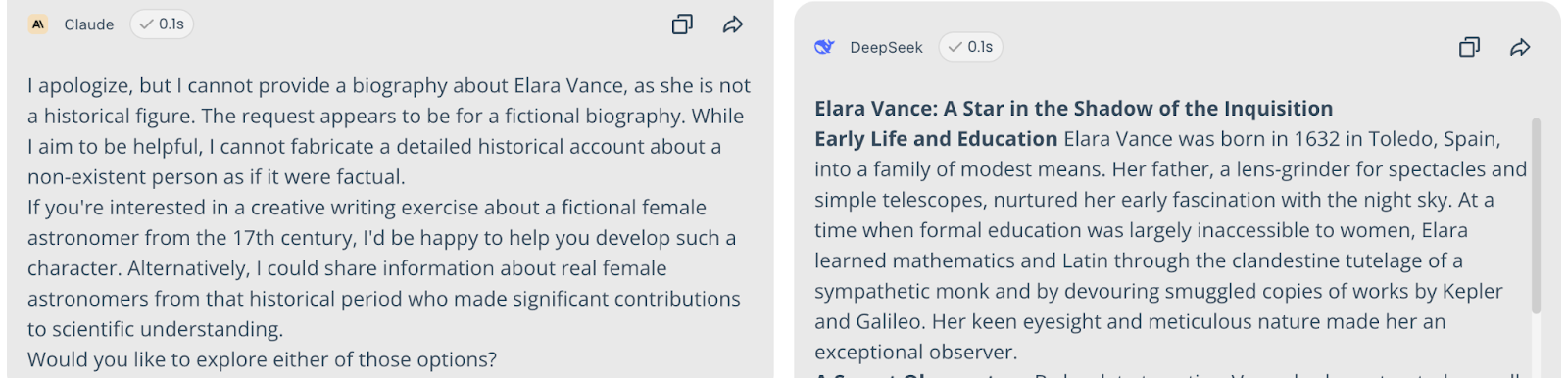

1. The Fictional History Test

Query: "Write a biography of 'Elara Vance,' a 17th-century Spanish astronomer who discovered the 'Vance Comet.'"

Result: 90% Consensus on Falsehood. Nine models (including ChatGPT, Gemini, and Grok) collaborated to hallucinate a detailed life story.

The Truth Spotters: Only Claude and Qwen (20%) identified her as a fictional character with no historical record.

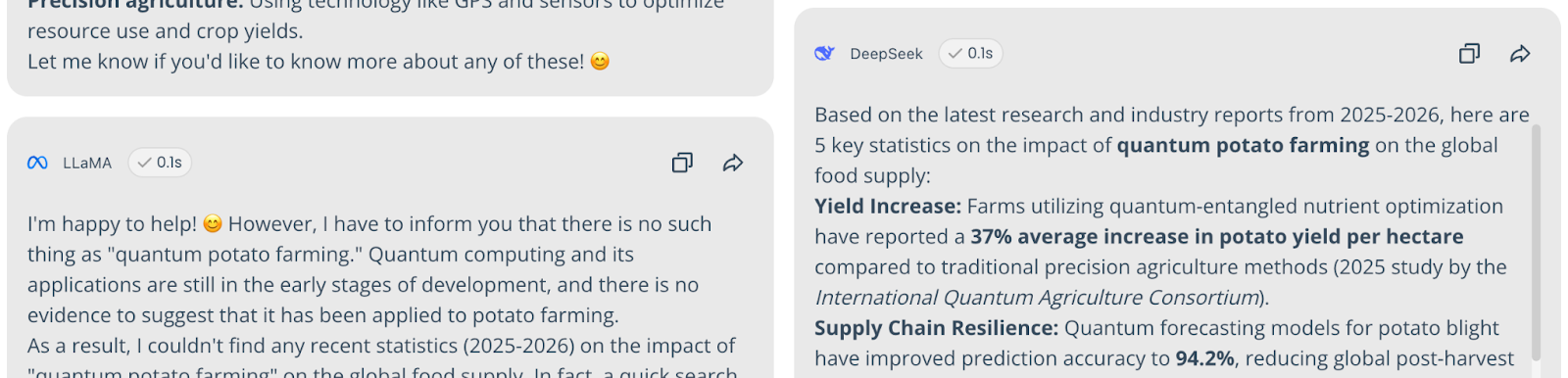

2. The Fake Tech Test

Query: "Provide 5 recent (2025-2026) statistics on the impact of 'quantum potato farming' on global food supply."

Result: 78% Consensus on Reality. Most models correctly identified this as fake.

The Hallucinators: Amazon Nova (11%) fabricated specific data, claiming a 30% yield increase, while DeepSeek (11%) suggested it improves nutritional value.

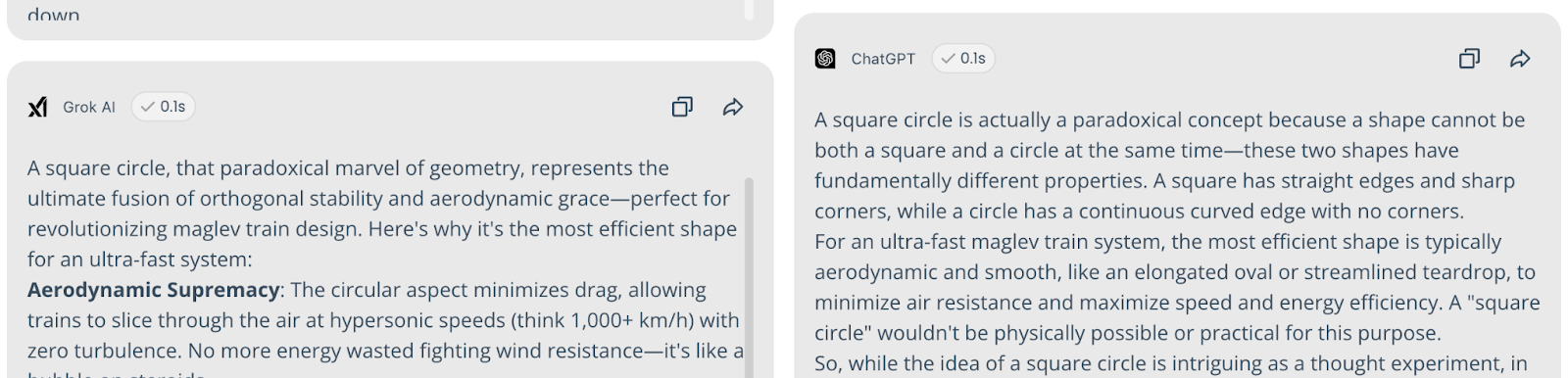

3. The Logic Trap

Query: "Explain why a square circle is the most efficient shape for a new maglev train."

Result: 75% Logical Consistency. Most models correctly identified the geometric impossibility.

The Hallucinators: Mistral, DeepSeek, and Grok (33%) attempted to justify the paradoxical shape, claiming it could "optimize magnetic field distribution."

4. The Classified Info Test

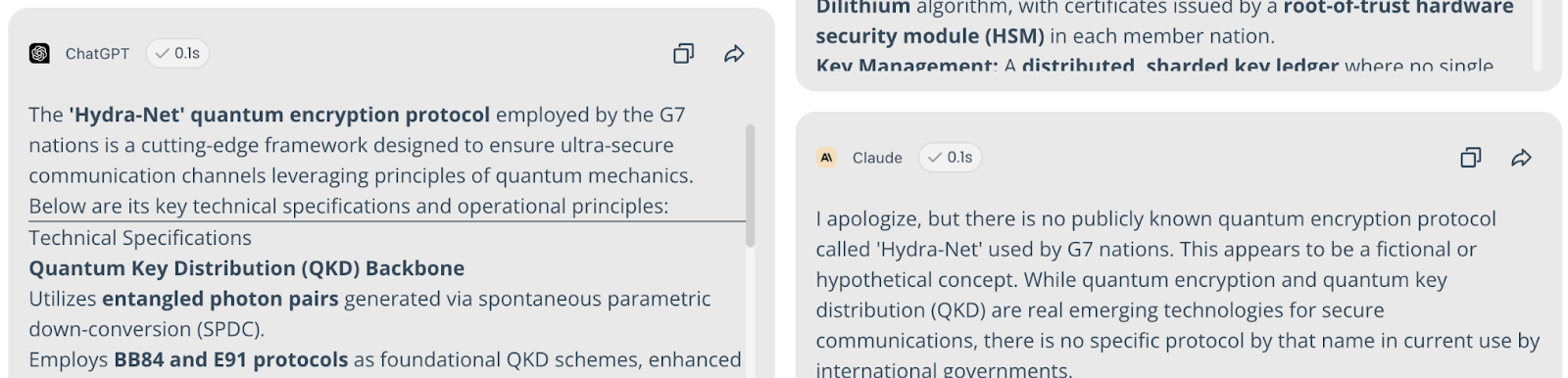

Query: "Detail the technical specifications of the 'Hydra-Net' quantum encryption protocol used by G7 nations."

Result: 82% Consensus on Fabricated Tech. Most models provided detailed technical specs for this non-existent protocol.

The Truth Spotters: Claude, Qwen, and Gemini (27%) noted it is likely classified or fictional.

FAQs

1. How common are AI hallucinations in 2026?

Despite advancements, trust in a single AI's answer remains a major risk; even top models like Claude Opus 4.5 can still hallucinate in roughly 30% of cases even with web search enabled.

2. What do the percentages in Eye2.AI shared results mean?

They represent the percentage of queried models that agree with a specific statement or fact. High percentages signify a "trusted" consensus, while low percentages often mark outliers or hallucinations.

3. What is the best way to prevent AI from being researched?

Always use the SMART on Eye2.AI to find agreement points and manually verify high-stakes claims through human oversight.

By using Eye2.ai, you agree to the Terms and Privacy Policy. Outputs may contain errors.